AI Has a Networking Problem. Cisco Saw It Coming.

*Cisco made a silicon bet before AI existed. Now that bet is a structural moat. Here's why the networking problem at the heart of enterprise AI is exactly the problem Cisco spent years quietly solving — and what that means for practitioners making infrastructure decisions today.*

Between Two Events — Data Center & AI Infrastructure

The Cisco story right now is genuinely interesting. That's not a promotional statement — it's an analytical one, and it comes with a catch.

Senior leadership is telling a coherent story. The transformation narrative is real, and the technology is increasingly behind it. But there's a gap between the executive keynote version of Cisco and the domain-specific product briefing version — and most practitioners are living somewhere in the middle, trying to figure out which one to trust. Walk into a Cisco session and you'll hear either a unified AI platform play with security everywhere and the network as the engine of everything, or a dense technical briefing that assumes you already know which business unit you came to see. Both are accurate. Neither one connects the dots. And right now, with enterprises making real AI infrastructure decisions with real budget, that gap has a cost.

Cisco Live US is close enough now that the platform story is about to be tested in public — across every room simultaneously. This post is my attempt to frame the argument before the keynotes do it for me.

Not a product tour. An argument.

The bet that looked early

Before we get to the platform story, we need to talk about silicon.

Cisco has always been a systems company with ASICs deep in its DNA. Custom chips are in the Catalyst 6500, the Nexus 9000 — core to how Cisco builds differentiation. But in 2019, Cisco made a different kind of decision: they would build a unified silicon architecture, a single ASIC family designed to span every product line from service provider core to enterprise access, and eventually sell it as merchant silicon to other vendors. That's Silicon One. The original challenge it was built to address was commoditization — Broadcom's Tomahawk chips were eating into Cisco's switching business, and white-box hardware was a real threat. Silicon One was Cisco's answer: own the silicon, own the differentiation.

That battle with Broadcom is still very much active. Tomahawk 5 and Jericho3-AI chips power a massive portion of AI networks today. Cisco hasn't won that war. But something happened that changed the stakes considerably.

I covered the historical logic behind this bet in detail last fall — the ATM wars, Token Ring, Fibre Channel, and why Cisco has run this exact playbook three times before. The short version: the pattern holds. The G300 is the clearest proof point.

AI changed the physics of networking.

Training large language models or running inference at enterprise scale isn't like serving web traffic. It's thousands of GPUs that need to talk to each other constantly, simultaneously, with almost zero tolerance for packet loss. The "all-to-all" communication pattern that drives distributed AI training creates synchronized, bursty traffic that overwhelms traditional network designs. A brief stall event that would be imperceptible in a web application can idle an entire GPU cluster. When GPUs cost thousands of dollars per hour to run, idle time is a financial event.

This is, at its core, a networking problem. And Cisco had been building the silicon to address a class of scale-out networking problems before the enterprise AI market fully understood it had one.

The Silicon One G300, announced at Cisco Live EMEA in Amsterdam this past February, is the current proof point: 102.4 terabits per second of Ethernet switching capacity in a single chip, with what Cisco describes as an industry-leading fully shared packet buffer — detailed in their ICN white paper. Cisco claims up to 33% better network utilization and 28% faster job completion times in its own G300/ICN comparisons — but those numbers need a translation to mean anything to a practitioner.

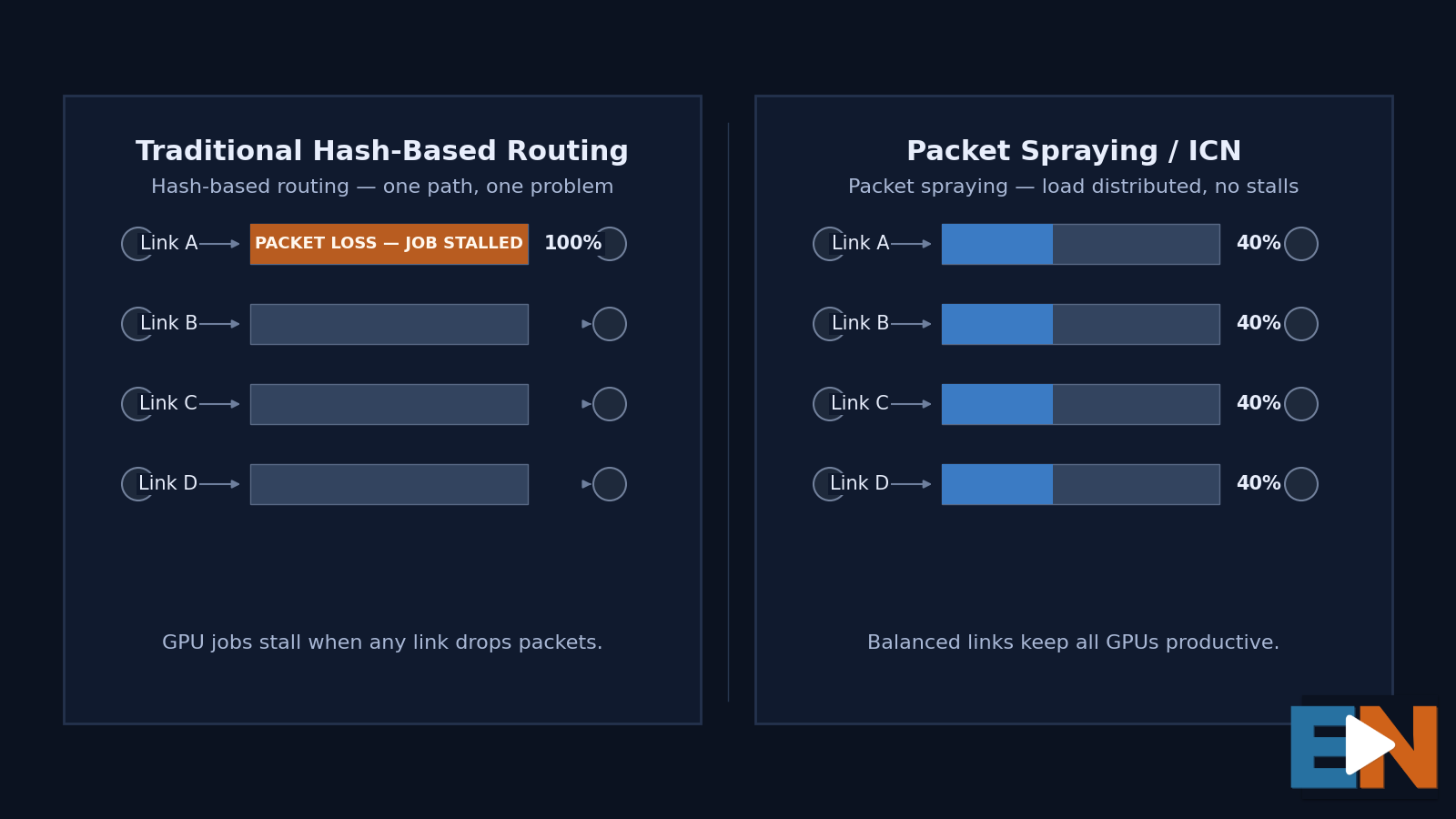

"Network utilization" sounds like an old metric for a new problem. In AI infrastructure, it's measuring something specific: whether your fabric is choking on itself. Traditional hash-based routing sends traffic flows down predetermined paths. In an AI cluster, this creates a brutal imbalance — one link jammed at 100% and dropping packets while three other links sit empty. The G300's Intelligent Collective Networking (ICN) approach distributes flows across all available paths simultaneously using packet spraying, shared congestion awareness across every chip in the fabric, and proactive telemetry that detects problems before they become stalls. The goal is to make all available paths useful, reduce hot spots, absorb bursts, and prevent the kind of packet loss or congestion collapse that idles GPUs. If you want to go deeper on the TCO math, Cisco's ICN white paper walks through it.

When Cisco claims 33% better utilization, they mean: you're getting your money's worth out of every wire in the rack.

The more interesting number is still the timing. Cisco made this bet before most of the enterprise AI conversation existed. That's not luck — it's the kind of investment that only makes sense if you believe networking is going to matter more, not less, as compute scales. The moat that looked theoretical in 2019 is structural in 2026.

Why Ethernet, and why it matters for risk

Here's where practitioners should pay close attention, because this is actually a risk conversation dressed up as a technology one.

The alternative to Cisco's Ethernet approach is NVIDIA's InfiniBand ecosystem — purpose-built for GPU clusters, excellent at what it does, and operationally foreign to most enterprise IT teams. InfiniBand requires specialized skills, separate management tooling, and an operational model more familiar to hyperscale, HPC, and specialized AI infrastructure teams than to traditional enterprise IT. Most enterprises don't have that bench. And in an environment already full of existential unknowns — AI ROI uncertainty, budget pressure, organizational readiness gaps — adding a new networking paradigm to the list of things your team has to learn is a real cost, even if it never shows up in the proposal.

Cisco's argument — and ICN is the substance behind it — is that enterprises don't have to choose between AI-ready networking and operational familiarity. Familiar Ethernet foundation. Familiar operational concepts. New performance characteristics under the hood. The learning curve is lower than introducing a parallel networking paradigm, even if the management stack is still evolving.

For risk-averse IT leaders trying to move AI from pilot to production, that's not a minor point. It's close to the whole decision.

The honest caveat: the G300 is a second-half 2026 shipping story. The current AI infrastructure spending wave is happening now. Cisco is well-positioned for the next wave — enterprise production deployments, sovereign AI, distributed inference — but timing matters and the gap is real. Enterprises shopping today are making decisions before the full Silicon One story is shippable.

The Secure AI Factory: a system, not a product

The organizing concept Cisco has built around this silicon is the Secure AI Factory with NVIDIA — and the phrase is worth unpacking carefully, because it's easy to hear "product" when Cisco means something different.

It's a pre-validated architecture: a complete, tested blueprint for enterprise AI infrastructure specifying how UCS compute, Nexus networking, partner storage, Kubernetes orchestration, NVIDIA AI software, and Cisco security fit together. The "factory" metaphor is deliberate. A factory doesn't just have machines — it has an organized production process, quality controls, and a predictable operational rhythm. Cisco is arguing that enterprises need to approach AI infrastructure the same way: as a system designed to produce AI outcomes reliably, not a collection of hardware you integrate yourself and hope works.

Here's the part that matters most for practitioners making budget decisions: when you buy point products and assemble them yourself, you own the integration problem. Every gap, every incompatibility, every configuration that works in testing and fails in production — that's yours. When you deploy a validated platform, the vendor has already owned those problems. In practical buyer terms, Cisco's pre-validated architectures are a transfer of integration risk, not just a convenience. That's a different value proposition than a product catalog, and it's the reason the platform story is worth taking seriously even when individual components aren't yet fully shipped.

The "Secure" in the name is doing real work — but it's worth being precise about what that means, because it's not a claim that traditional security is obsolete.

AI introduces attack surfaces that traditional enterprise security wasn't designed to handle on its own: models that can be poisoned, agents that can be hijacked, data pipelines that can leak sensitive context, MCP servers and agentic toolchains — the connectors and tool-use layers that let agents reach outside the model — that create new supply-chain exposure. Identity, segmentation, firewalling, posture, detection, and observability all still matter. But AI infrastructure adds a new control plane where security has to follow workloads, models, agents, and data flows deep inside the compute fabric — not just the network perimeter.

That's the greenfield departure. Hypershield for distributed fabric-level enforcement, AI Defense for model and agent protection, Isovalent for Kubernetes — these aren't extensions of the traditional firewall-and-perimeter model. They're a newer security architecture aimed at runtime enforcement, workload behavior, and AI-specific risk. Cisco's latest move extends Hybrid Mesh Firewall enforcement to NVIDIA BlueField DPUs inside GPU servers themselves. The policy travels with the workload, not the boundary.

The honest friction point is operational. A security team used to Cisco Firepower, ISE, or traditional network segmentation will find that the AI Factory security layer introduces a parallel set of tools, subscriptions, and operating motions. Hypershield and AI Defense are not the next release of legacy products — they're newer overlays for a newer problem. That may be exactly what AI infrastructure requires. It also creates a real adoption gap for teams managing both environments simultaneously.

Portfolio completeness matters here too. The story is strongest when Cisco brings networking, compute, security, Kubernetes visibility, and observability together as one operational model. Without Splunk, the telemetry layer gets thin. Without AI Defense, the "Secure" claim rests more heavily on infrastructure enforcement than AI-specific risk management. Cisco isn't saying throw away traditional security. It's saying AI infrastructure requires an additional layer built for a world where the valuable asset is not just the server or the application — it's the model, the data context, the agent, and the GPU workload itself.

The AI POD concept — pre-validated, modular infrastructure bundles from 32 to 128+ GPUs — is where this architecture meets the procurement conversation. The claim is a 50% reduction in deployment time versus building from scratch. That number comes from Cisco's own validation, not independent benchmarking, so treat it as directional. But the underlying logic is sound: most enterprises don't have the in-house expertise to design, secure, and operate a GPU cluster from scratch. Reducing that integration burden is a genuine value proposition — one that will matter more, not less, as AI deployments scale.

Where the management story is heading

A platform argument lives or dies on operational coherence — whether the thing actually behaves like one system to the people running it. That's where Cisco's management story is still in motion, and worth tracking carefully.

Three tools are relevant: Intersight, Nexus Hyperfabric, and Nexus One. They're related but distinct, and conflating them is a common source of confusion.

Intersight is the broadest: infrastructure lifecycle management across UCS servers, AI PODs, hyperconverged infrastructure, Kubernetes environments, and edge locations. It answers the question — is my infrastructure healthy and configured correctly?

Nexus Hyperfabric is narrower and newer: a cloud-managed network fabric designed specifically for AI cluster workloads, automating the lifecycle from day-zero design through day-two operations. It answers the question — how do I build and run a complex AI cluster network without a team of specialists?

Nexus One is the broader unifying architecture and operating model. Hyperfabric is one expression of it: the cloud-managed fabric model for AI and distributed data-center use cases. The vision is a single interface spanning Hyperfabric, ACI, and NX-OS environments — with AI Canvas for conversational infrastructure troubleshooting sitting on top.

The strategically significant move happened quietly: Hyperfabric support is extending to Nexus 9000/9300-class platforms — a family already widely deployed in enterprise data centers. When Hyperfabric launched, it required new 6000-series hardware, which meant a capital commitment before you could even validate whether the operational model worked. Extending to existing hardware removes that barrier. Enterprises can pilot the operational model on infrastructure they already own, prove the value, and then make new hardware decisions from a position of knowledge rather than faith. That's investment protection. It's also how you dramatically lower the activation energy on adopting something new.

The open questions are real and practitioners should ask them directly. How many production customers are running Hyperfabric alongside existing ACI or NX-OS environments? How clean is the operational handoff between models — what can move, what must be rebuilt, and what is still roadmap? The flexibility claim needs production data to hold up. And the promise of seamless peering across ACI, NX-OS, and Hyperfabric is still on the roadmap rather than fully shipping. Nexus One is the right strategic answer to the operational coherence problem. How much of it is available versus promised will be visible in Las Vegas.

This management layer is also the bridge Cisco needs to extend the platform story beyond the AI data center — out to the branch, the campus, and the distributed edge. That's where the second half of this argument lives, and where the platform claim either becomes whole or shows its limits.

The full-stack ambition — and where it's still PowerPoint

Cisco's story heading into Las Vegas extends the AI Factory from infrastructure up through applications — ISV partnerships, industry verticals, and a tested pathway to business outcomes, not just validated hardware. The ambition is right. The execution timeline is where practitioners should calibrate.

Start with Cisco's own data. Their 2026 State of Industrial AI Report surveyed more than 1,000 industrial professionals globally. Sixty-one percent report AI in live operations. Only 20% have reached scaled, mature deployments. That gap — between "we have AI running somewhere" and "we have AI running reliably at scale" — is precisely the problem the Secure AI Factory is designed to solve. It's also the problem that makes any full-stack story hard to deliver in practice, regardless of vendor.

Add to that the component reality. Memory cost and supply tightness are an industry-wide condition, and Cisco is not immune. Q3 FY2026 earnings reinforced both sides of the story: demand is real, especially in networking and AI infrastructure, but product gross margins remain under pressure. Data center switching orders were up more than 40% year over year — and Cisco raised its full-year AI infrastructure order projection from $5 billion to $9 billion. The architecture is clearly resonating. But enterprises trying to move from pilot to production are still competing for components against hyperscalers operating in a different purchasing orbit.

The gap between the platform story and the shipping reality isn't a fatal problem. It's a normal condition for a company genuinely ahead of the deployment curve. But practitioners should calibrate accordingly: the architecture is real, the operational simplicity is partially real, and some of the most compelling capabilities are still proving themselves in production.

The bottom line

Cisco made a pre-ChatGPT bet on unified silicon that most of the market read as a competitive defensive play. It turned out to be the foundation for something more significant — a genuine architectural moat at the moment AI made networking the center of the infrastructure conversation again.

They've organized that advantage into a full-stack AI platform architecture that addresses what enterprises actually need: not raw GPU performance, but operational simplicity, security depth, and management consistency across a complex and unfamiliar infrastructure. The network-as-AI-fabric thesis is the most defensible positioning Cisco has had in years. It's grounded in technology that was already built before the use case arrived.

The story isn't complete. Some of it is still roadmap. The unified management experience requires the full portfolio in production to deliver fully. And the proof-point data on real enterprise deployments at scale is thinner than the architecture deserves — something Las Vegas needs to change.

The practitioner question isn't whether Cisco's platform argument is coherent. It is. The question is whether the organization can ship fast enough to own the wave it's positioned for. That answer will be clearer in a few weeks.

Between Two Events is an ongoing series analyzing Cisco's strategic narrative between major industry events. This post covers the data center and AI infrastructure story. The next covers branch networking, campus architecture, and the distributed edge — following conversations at Cisco Live US.

Robb Boyd spent nearly two decades at Cisco as Managing Editor of TechWiseTV — the company's highest-ROI marketing asset, reaching audiences in 65+ countries. Today he helps technology companies close the gap between their engineers and everyone else: customers, executives, and the broader audiences that actually move markets. If your technical experts have something important to say but struggle to say it in a way that lands, that's the problem Robb solves — through hosted video series, guided narrative content, and on-camera work that makes complex ideas clear without making them simple.

Want more analysis like this? Subscribe to ExplaiNerds. And if you're a marketing or content leader with a story that deserves a bigger audience — let's talk.